Self-Supervised Learning via

Conditional Motion Propagation

Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (

CVPR) 2019

Abstract

Intelligent agent naturally learns from motion. Various self-supervised algorithms have leveraged motion cues to learn effective visual representations. The hurdle here is that motion is both ambiguous and complex, rendering previous works either suffer from degraded learning efficacy, or resort to strong assumptions on object motions. In this work, we design a new learning-from-motion paradigm to bridge these gaps. Instead of explicitly modeling the motion probabilities, we design the pretext task as a conditional motion propagation problem. Given an input image and several sparse flow guidance vectors on it, our framework seeks to recover the full-image motion. Compared to other alternatives, our framework has several appealing properties: (1) Using sparse flow guidance during training resolves the inherent motion ambiguity, and thus easing feature learning. (2) Solving the pretext task of conditional motion propagation encourages the emergence of kinematically-sound representations that poss greater expressive power. Extensive experiments demonstrate that our framework learns structural and coherent features; and achieves state-of-the-art self-supervision performance on several downstream tasks including semantic segmentation, instance segmentation, and human parsing. Furthermore, our framework is successfully extended to several useful applications such as semi-automatic pixel-level annotation.

Representation Learning

1. Pascal VOC 2012 Semantic Segmentation (AlexNet)

| Method (AlexNet) | Supervision (data amount) | % mIoU |

| Krizhevsky et al. [1] | ImageNet labels (1.3M) | 48.0 |

| Random | - (0) | 19.8 |

| Pathak et al. [2] | In-painting (1.2M) | 29.7 |

| Zhang et al. [3] | Colorization (1.3M) | 35.6 |

| Zhang et al. [4] | Split-Brain (1.3M) | 36.0 |

| Noroozi et al. [5] | Counting (1.3M) | 36.6 |

| Noroozi et al. [6] | Jigsaw (1.3M) | 37.6 |

| Noroozi et al. [7] | Jigsaw++ (1.3M) | 38.1 |

| Jenni et al. [8] | Spot-Artifacts (1.3M) | 38.1 |

| Larsson et al. [9] | Colorization (3.7M) | 38.4 |

| Gidaris et al. [10] | Rotation (1.3M) | 39.1 |

| Pathak et al. [11]* | Motion Segmentation (1.6M) | 39.7 |

| Walker et al. [12]* | Flow Prediction (3.22M) | 40.4 |

| Mundhenk et al. [13] | Context (1.3M) | 40.6 |

| Mahendran et al. [14] | Flow Similarity (1.6M) | 41.4 |

| Ours | CMP (1.26M) | 42.9 |

| Ours | CMP (3.22M) | 44.5 |

| Caron et al. [15] | Clustering (1.3M) | 45.1 |

| Feng et al. [16] | Feature Decoupling (1.3M) | 45.3 |

2. Pascal VOC 2012 Semantic Segmentation (ResNet-50)

| Method (ResNet-50) | Supervision (data amount) | % mIoU |

| Krizhevsky et al. [1] | ImageNet labels (1.2M) | 69.0 |

| Random | - (0) | 42.4 |

| Walker et al. [12]* | Flow Prediction (1.26M) | 54.5 |

| Pathak et al. [11]* | Motion Segmentation (1.6M) | 54.6 |

| Ours | CMP (1.26M) | 59.0 |

3. COCO 2017 Instance Segmentation (ResNet-50)

| Method (ResNet-50) | Supervision (data amount) | Det. (% mAP) | Seg. (% mAP) |

| Krizhevsky et al. [1] | ImageNet labels (1.2M) | 37.2 | 34.1 |

| Random | - (0) | 19.7 | 18.8 |

| Pathak et al. [11]* | Motion Segmentation (1.6M) | 27.7 | 25.8 |

| Walker et al. [12]* | Flow Prediction (1.26M) | 31.5 | 29.2 |

| Ours | CMP (1.26M) | 32.3 | 29.8 |

4. LIP Human Parsing (ResNet-50)

| Method (ResNet-50) | Supervision (data amount) | Single-Person (% mIoU) | Multi-Person (% mIoU) |

| Krizhevsky et al. [1] | ImageNet labels (1.2M) | 42.5 | 55.4 |

| Random | - (0) | 32.5 | 35.0 |

| Pathak et al. [11]* | Motion Segmentation (1.6M) | 36.6 | 50.9 |

| Walker et al. [12]* | Flow Prediction (1.26M) | 36.7 | 52.5 |

| Ours | CMP (1.26M) | 36.9 | 51.8 |

| Ours | CMP (4.57M) | 40.2 | 52.9 |

Note: Methods marked * have not reported the results in their paper, hence we reimplemented them to obtain the results.

References

- Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. Imagenet classification with deep convolutional neural networks. In NeurIPS, 2012.

- Deepak Pathak, Philipp Krahenbuhl, Jeff Donahue, Trevor Darrell, and Alexei A Efros. Context encoders: Feature learning by inpainting. In CVPR, 2016.

- Richard Zhang, Phillip Isola, and Alexei A Efros. Colorful image colorization. In ECCV. Springer, 2016.

- Richard Zhang, Phillip Isola, and Alexei A Efros. Split-brain autoencoders: Unsupervised learning by cross-channel prediction. In CVPR, 2017.

- Mehdi Noroozi, Hamed Pirsiavash, and Paolo Favaro. Representation learning by learning to count. In ICCV, 2017.

- Mehdi Noroozi and Paolo Favaro. Unsupervised learning of visual representations by solving jigsaw puzzles. In ECCV. Springer, 2016.

- Mehdi Noroozi, Ananth Vinjimoor, Paolo Favaro, and Hamed Pirsiavash. Boosting self-supervised learning via knowledge transfer. In CVPR, 2018.

- Simon Jenni and Paolo Favaro. Self-supervised feature learning by learning to spot artifacts. In CVPR, 2018.

- Gustav Larsson, Michael Maire, and Gregory Shakhnarovich. Colorization as a proxy task for visual understanding. In CVPR, 2017.

- Spyros Gidaris, Praveer Singh, and Nikos Komodakis. Unsupervised representation learning by predicting image rotations. In ICLR, 2018.

- Deepak Pathak, Ross B Girshick, Piotr Dollar, Trevor Darrell, and Bharath Hariharan. Learning features by watching objects move. In CVPR, 2017.

- Jacob Walker, Abhinav Gupta, and Martial Hebert. Dense optical flow prediction from a static image. In ICCV, 2015.

- T Nathan Mundhenk, Daniel Ho, and Barry Y Chen. Improvements to context based self-supervised learning. CVPR, 2018.

- A. Mahendran, J. Thewlis, and A. Vedaldi. Cross pixel optical flow similarity for self-supervised learning. In ACCV, 2018.

- Mathilde Caron, Piotr Bojanowski, Armand Joulin, and Matthijs Douze. Deep clustering for unsupervised learning of visual features. In ECCV, 2018.

- Zeyu Feng, Chang Xu, and Dacheng Tao. Self-Supervised Representation Learning by Rotation Feature Decoupling. In CVPR, 2019.

Visualizations

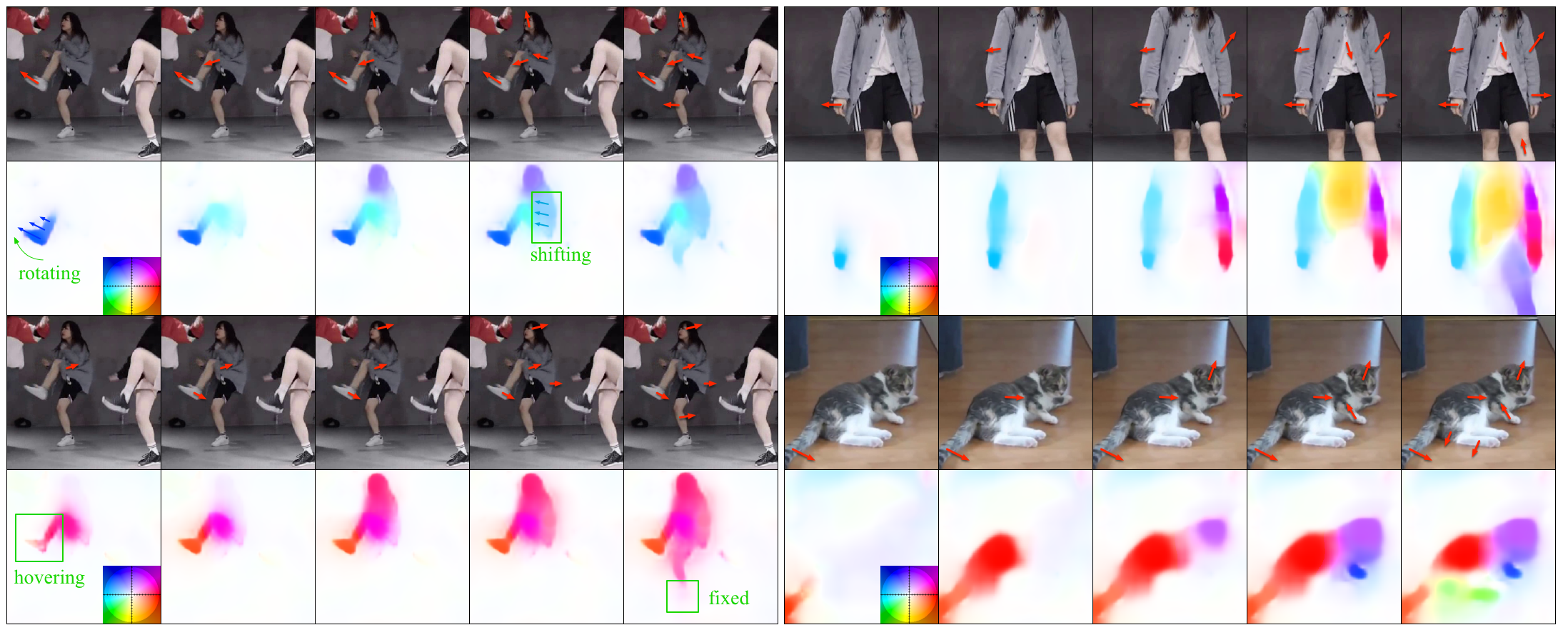

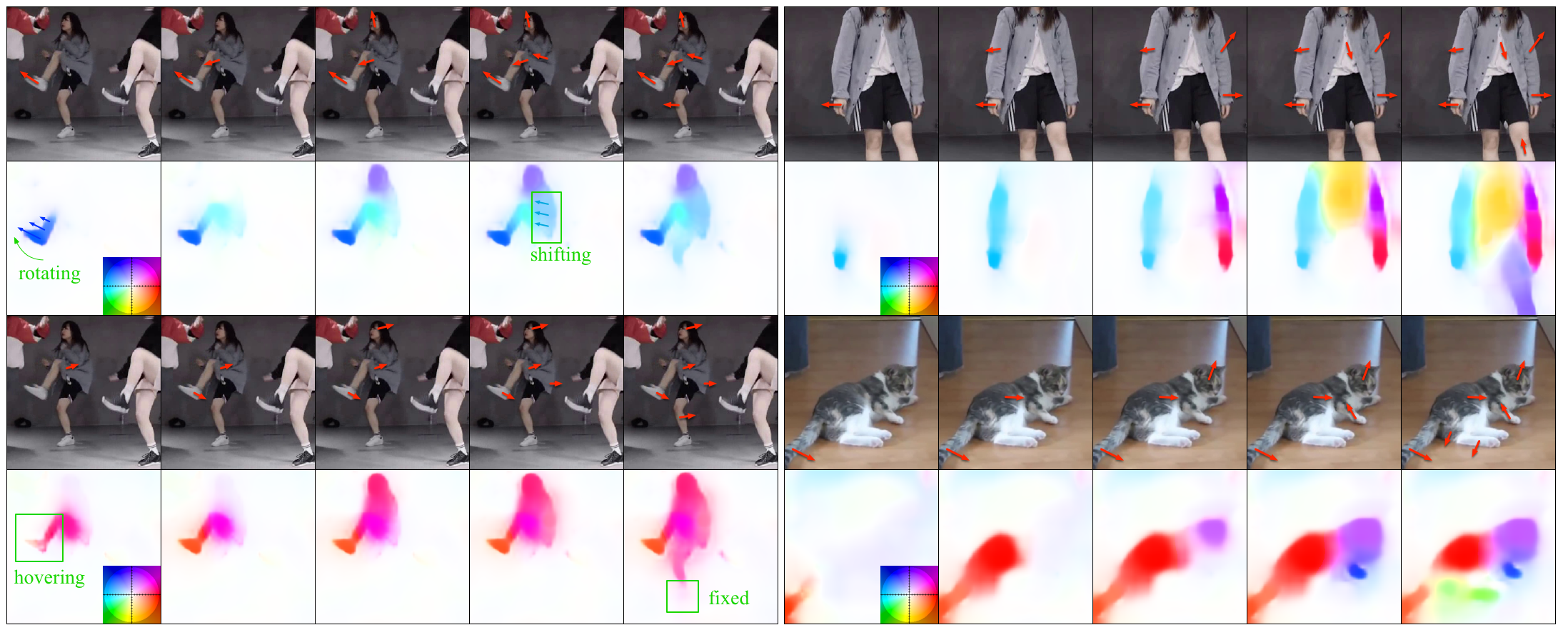

CMP testing results. In each group, the first row includes the original image and the guidance arrows given by users, the second row shows the predicted motion. The results demonstrate three characteristics of CMP: 1. CMP propagates motion on the whole rigid part. 2. CMP can infer whether a part is shifting or rotating (motion uniform if shifting, fading if rotating) as shown in the first group. 3. The results are physically feasible. For example, in the second group, given a single guidance vector on the left thigh, there are also responses on left shank and foot. It is due to the observation that the left leg is hovering. However, in the last column, although given a guidance vector on the right leg, the right foot keeps still because it is on the ground.

Citation

@inproceedings{zhan2019self,

author = {Zhan, Xiaohang and Pan, Xingang and Liu, Ziwei and Lin, Dahua and Loy, Chen Change},

title = {Self-Supervised Learning via Conditional Motion Propagation},

booktitle = {Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR)},

month = {June},

year = {2019}

}