Deep Specialized Network for Illuminant Estimation

Wu Shi1, Chen Change Loy1,2, and Xiaoou Tang1,2

1Department of Information Engineering, The Chinese University of Hong Kong,

2Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences.

The 14th European Conference on Computer Vision (ECCV) 2016, Amsterdam, The Netherlands

[PDF] [Supplementary Material] [Poster] [Codes]

Abstract

Illuminant estimation to achieve color constancy is an ill-posed problem. Searching the large hypothesis space for an accurate illuminant estimate is hard due to the ambiguities of unknown reflections and local patch appearances. In this work, we propose a novel Deep Specialized Network (DS-Net) that is adaptive to diverse local regions for estimating robust local illuminants. This is achieved through a new convolutional network architecture with two interacting sub-networks, i.e., a hypotheses network (HypNet) and a selection network (SelNet). In particular, HypNet generates multiple illuminant hypotheses that inherently capture different modes of illuminants with its unique two-branch structure. SelNet then adaptively picks confident estimates from these plausible hypotheses. Extensive experiments on the two largest color constancy benchmark datasets show that the proposed 'hypothesis selection' approach is effective at overcoming erroneous estimates. Through the synergy of HypNet and SelNet, our approach outperforms state-of-the-art methods such as [1-3].

Introduction

1. The observed color I is given by

$$I_c = E_c \times R_c, \quad c \in \{r, g, b\}$$

where E is the RGB illumination and R is the RGB value of reflectance under canonical (often white) illumination. Estimating E from I is underdetermined: both the illuminant and the surface colors in an observed image are unknown.
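The ambiguity can be made concrete with a minimal sketch of the per-channel model, in which the observed color is the product of illuminant and reflectance. The function names and the numeric values are hypothetical, chosen only to show that two different illuminant/reflectance pairs can produce the same observation.

```python
def render(illuminant, reflectance):
    """Observed color: per-channel product of illuminant E and reflectance R."""
    return tuple(e * r for e, r in zip(illuminant, reflectance))


def correct(observed, illuminant):
    """Recover reflectance by dividing the observation by a known illuminant."""
    return tuple(i / e for i, e in zip(observed, illuminant))


# Two different (E, R) pairs with made-up values.
observed_1 = render((1.0, 0.5, 0.25), (0.2, 0.4, 0.8))
observed_2 = render((0.5, 0.5, 0.5), (0.4, 0.4, 0.4))

# Both pairs explain the same observed color, so E cannot be read off I alone.
same_observation = all(abs(a - b) < 1e-12 for a, b in zip(observed_1, observed_2))

# With the true illuminant known, the reflectance is recovered directly.
recovered = correct(observed_1, (1.0, 0.5, 0.25))
```

Once an illuminant estimate is available, the same division is what restores the image to canonical lighting.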

2. Finding a good hypothesis of illuminant becomes harder when there are ambiguities caused by complex interactions of extrinsic factors such as surface reflections and different texture appearances of objects. We argue that it is non-trivial to learn a model that can encompass the large and diverse hypothesis space given limited samples provided during the training stage.

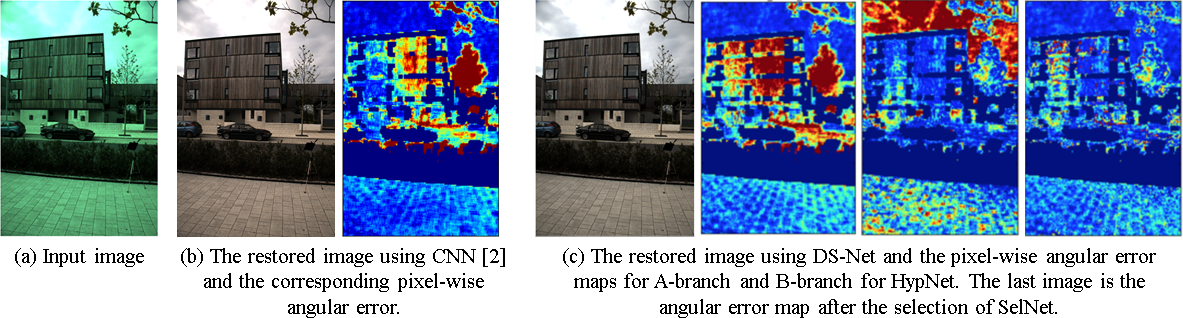

Fig. 1. The proposed DS-Net shows superior performance over existing methods in handling regions with different intrinsic properties, thanks to the unique synergy between the hypotheses network (HypNet) and selection network (SelNet). In this example, the different branches of HypNet provide complementary illuminant estimates based on their specialization. SelNet automatically picks the optimal estimates and yields a considerably lower angular error than that obtained from each respective branch in HypNet, as well as that obtained from CNN [2]. The angular error is the angle between the estimated and ground-truth illuminant vectors.
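As a point of reference, the angular error used throughout can be computed as in the following sketch. It assumes illuminants are given as RGB triples and reports the angle in degrees; the function name is illustrative and not taken from the released code.

```python
import math


def angular_error_deg(estimate, ground_truth):
    """Angle in degrees between two illuminant vectors.

    The measure ignores vector magnitude, so only the illuminant's
    chromaticity (direction in RGB space) affects the result.
    """
    dot = sum(a * b for a, b in zip(estimate, ground_truth))
    norm_est = math.sqrt(sum(a * a for a in estimate))
    norm_gt = math.sqrt(sum(b * b for b in ground_truth))
    # Clamp to [-1, 1] to guard against floating-point round-off.
    cosine = max(-1.0, min(1.0, dot / (norm_est * norm_gt)))
    return math.degrees(math.acos(cosine))
```

Because the measure is scale-invariant, an estimate that is a brighter or dimmer copy of the true illuminant has an angular error of zero.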

Deep Specialized Network (DS-Net)

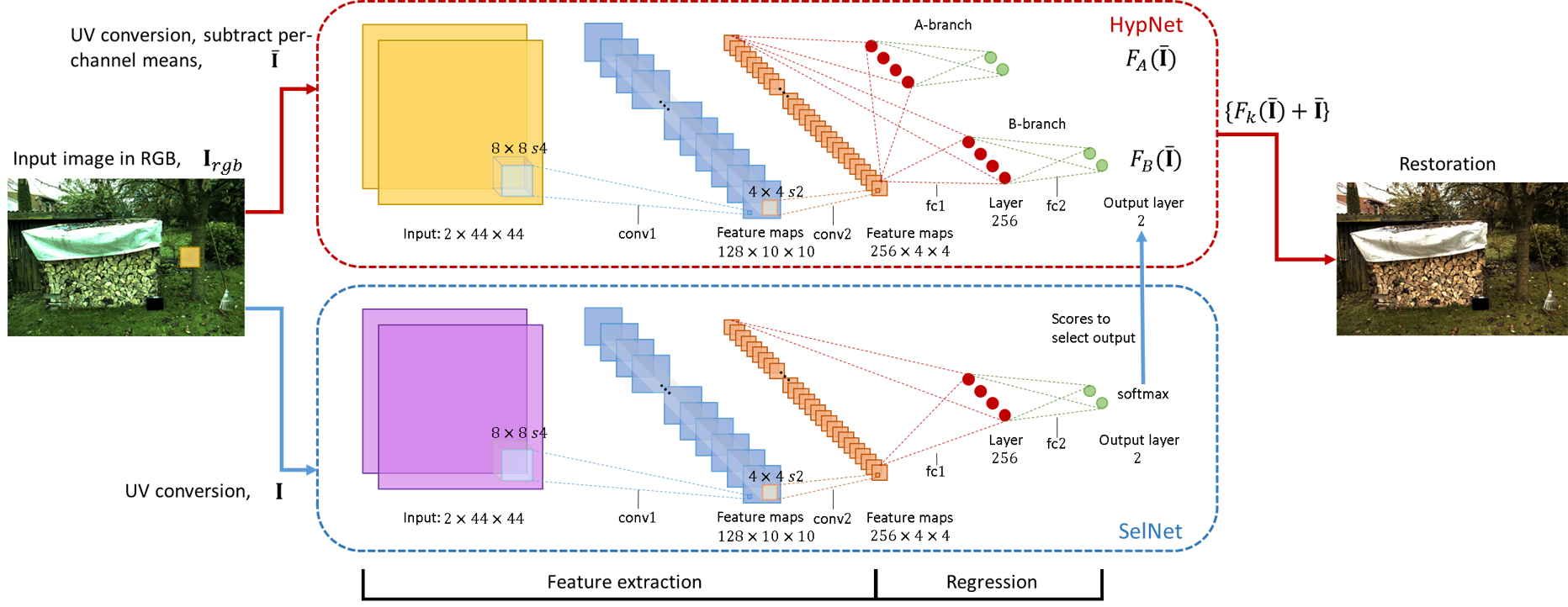

The proposed network, named Deep Specialized Network, consists of two closely coupled sub-networks: a multi-hypotheses network (HypNet) and a hypothesis selection network (SelNet).

HypNet

HypNet learns to map an image patch to multiple hypotheses of the illuminant of that patch. This is in contrast to existing network designs that usually provide just a single prediction. In our design, HypNet generates two competing hypotheses for the illuminant estimate of a patch through two branches that fork from a main CNN body. Each branch of HypNet is trained using a 'winner-take-all' learning strategy so that it automatically specializes in handling regions of a certain appearance. Specifically, for the i-th patch, the loss is defined by

$$L_i = \min_{k \in \{A, B\}} \left\| \tilde{E}_i^{k} - E_i^{*} \right\|_2^2$$

where \tilde{E} is a hypothesis from one branch and E* is the ground truth illumination.
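The winner-take-all idea can be sketched as follows: for each patch, only the branch whose hypothesis is closer to the ground truth contributes to the loss. This is a minimal illustration, not the authors' training code; the use of squared Euclidean distance as the per-branch error and all function names are assumptions.

```python
def squared_error(hypothesis, ground_truth):
    """Squared Euclidean distance (assumed per-branch error measure)."""
    return sum((h - g) ** 2 for h, g in zip(hypothesis, ground_truth))


def winner_take_all_loss(hyp_a, hyp_b, ground_truth):
    """Return the smaller of the two branch errors and the winning branch.

    Only the winning branch would receive a gradient for this patch,
    which is what lets the branches specialize over training.
    """
    errors = {
        "A": squared_error(hyp_a, ground_truth),
        "B": squared_error(hyp_b, ground_truth),
    }
    winner = min(errors, key=errors.get)
    return errors[winner], winner


# Hypothetical two-channel chrominance values for a single patch.
loss, winner = winner_take_all_loss((0.5, 0.5), (0.1, 0.2), (0.1, 0.1))
```

In this example branch B is the winner, so branch A's much larger error does not affect the loss for this patch.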

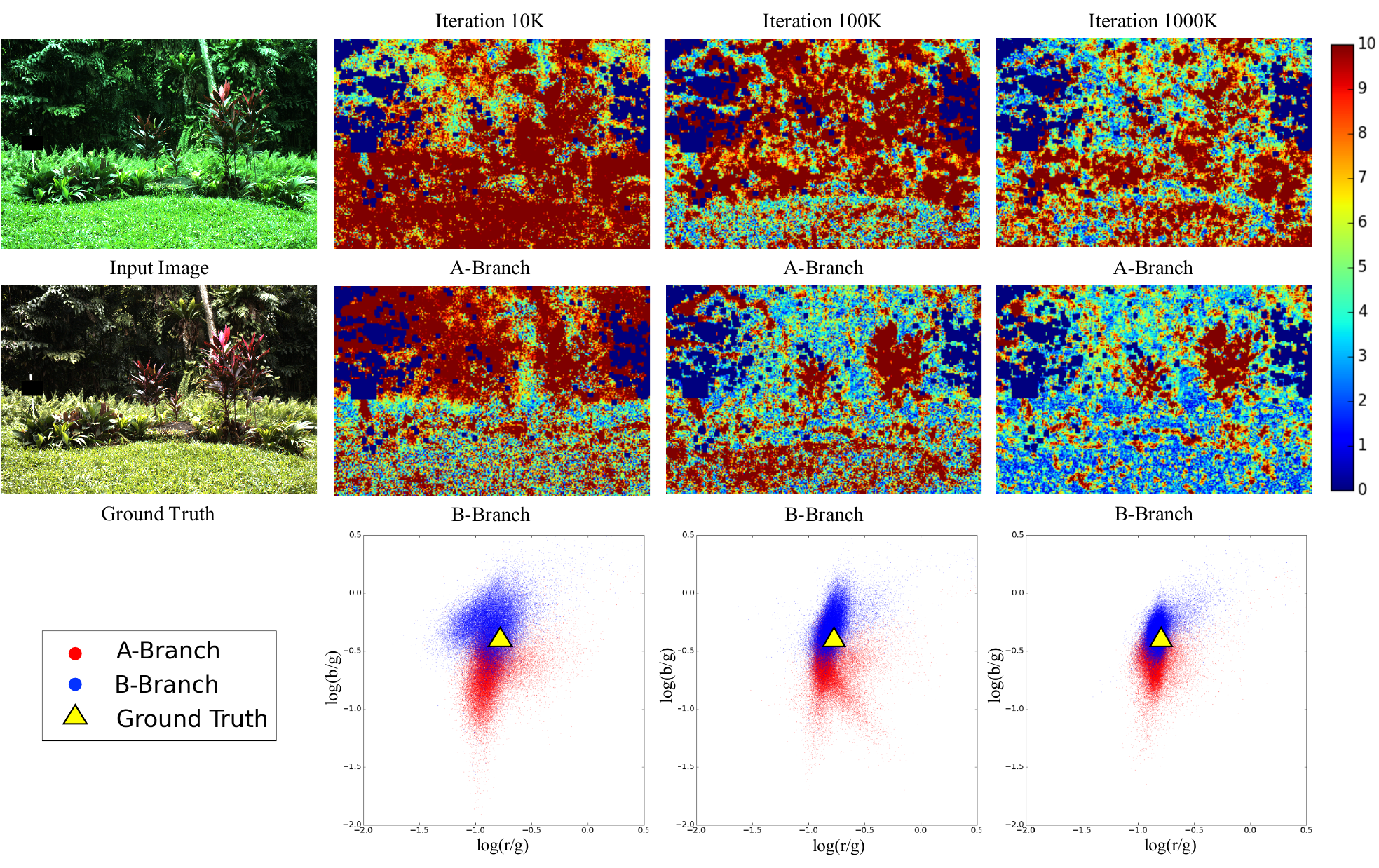

Fig. 2. Learning of the two branches of HypNet. The first two rows show the per-pixel angular error maps of the A and B branches of HypNet, using the models after 10K, 100K and 1000K training iterations. The last row depicts the UV chrominance of the per-pixel illumination estimated by the two branches at the corresponding iterations.

SelNet

SelNet votes on the hypotheses produced by HypNet. Specifically, it takes an image patch and generates a score vector that is used to pick the final illuminant hypothesis from one of the branches in HypNet. Experiments show that SelNet yields much more robust final predictions than simply averaging the hypotheses.
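The difference between selecting and averaging can be sketched as below. The score vector stands in for SelNet's output, and picking the highest-scoring branch is an assumption about how the scores are used; the names and values are illustrative only.

```python
def select_hypothesis(hypotheses, scores):
    """Return the hypothesis whose branch has the highest score."""
    best = max(range(len(scores)), key=lambda k: scores[k])
    return hypotheses[best]


def average_hypotheses(hypotheses):
    """Baseline: channel-wise mean of all hypotheses."""
    count = len(hypotheses)
    channels = len(hypotheses[0])
    return tuple(sum(h[c] for h in hypotheses) / count for c in range(channels))


# Hypothetical outputs of branches A and B, and made-up selection scores.
hypotheses = [(0.2, 0.4), (0.6, 0.8)]
scores = [0.1, 0.9]

selected = select_hypothesis(hypotheses, scores)
averaged = average_hypotheses(hypotheses)
```

When one branch is far off, averaging pulls the final estimate toward the wrong hypothesis, whereas selection can discard it entirely.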

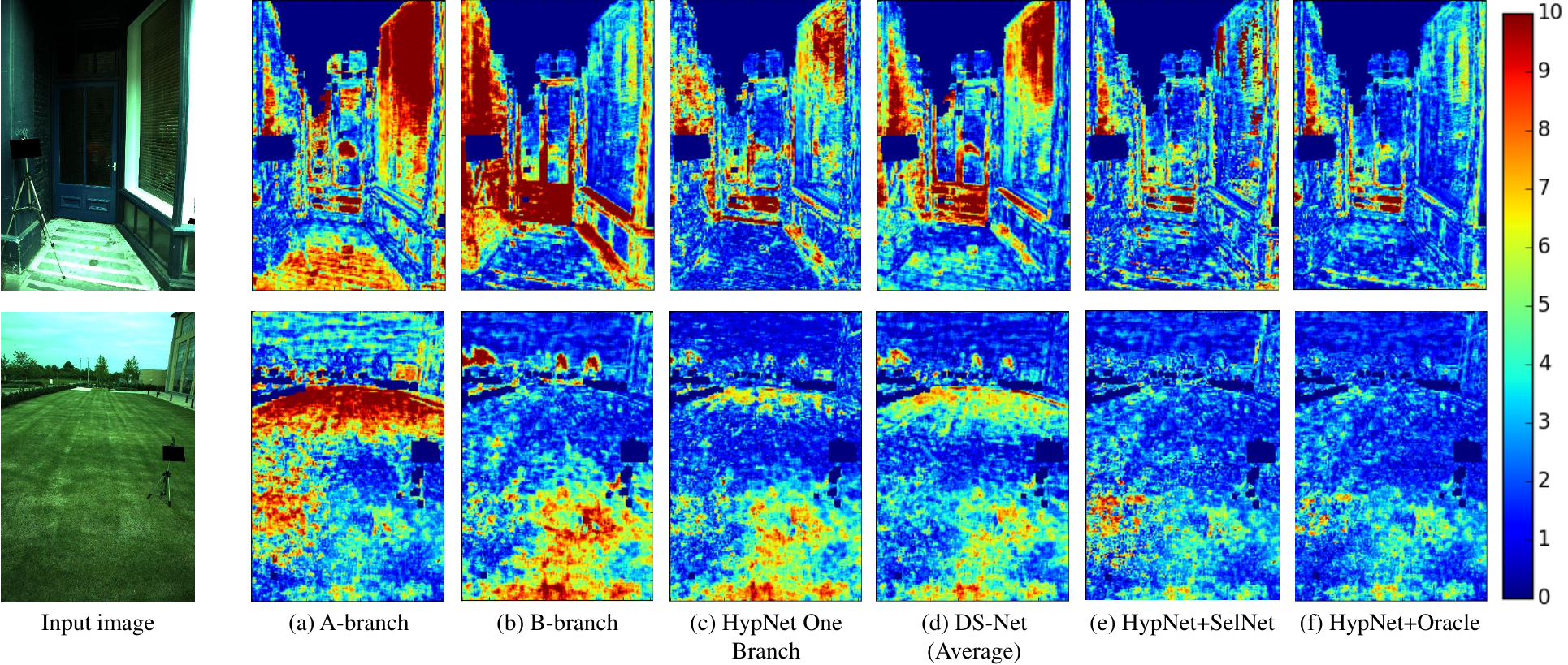

Fig. 3. Comparison of different selection schemes.

Local to Global Estimation

Our DS-Net can predict patch-wise local illumination for an image. For the global-illuminant setting, we simply perform a median pooling on all the local illuminant estimates of the image.
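A minimal sketch of the pooling step, assuming the median is taken independently per channel over all patch estimates (the page does not specify the exact form of the median); the function name and values are hypothetical.

```python
from statistics import median


def median_pool(local_estimates):
    """Channel-wise median over a list of per-patch illuminant estimates."""
    return tuple(median(channel) for channel in zip(*local_estimates))


# Three hypothetical patch estimates; the last one is an outlier.
local_estimates = [
    (0.1, 0.5, 0.9),
    (0.2, 0.4, 0.8),
    (0.9, 0.1, 0.2),
]
global_estimate = median_pool(local_estimates)
```

Unlike a mean, the median is not dragged toward the outlying patch, which is one plausible reason for preferring it when some local estimates are unreliable.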

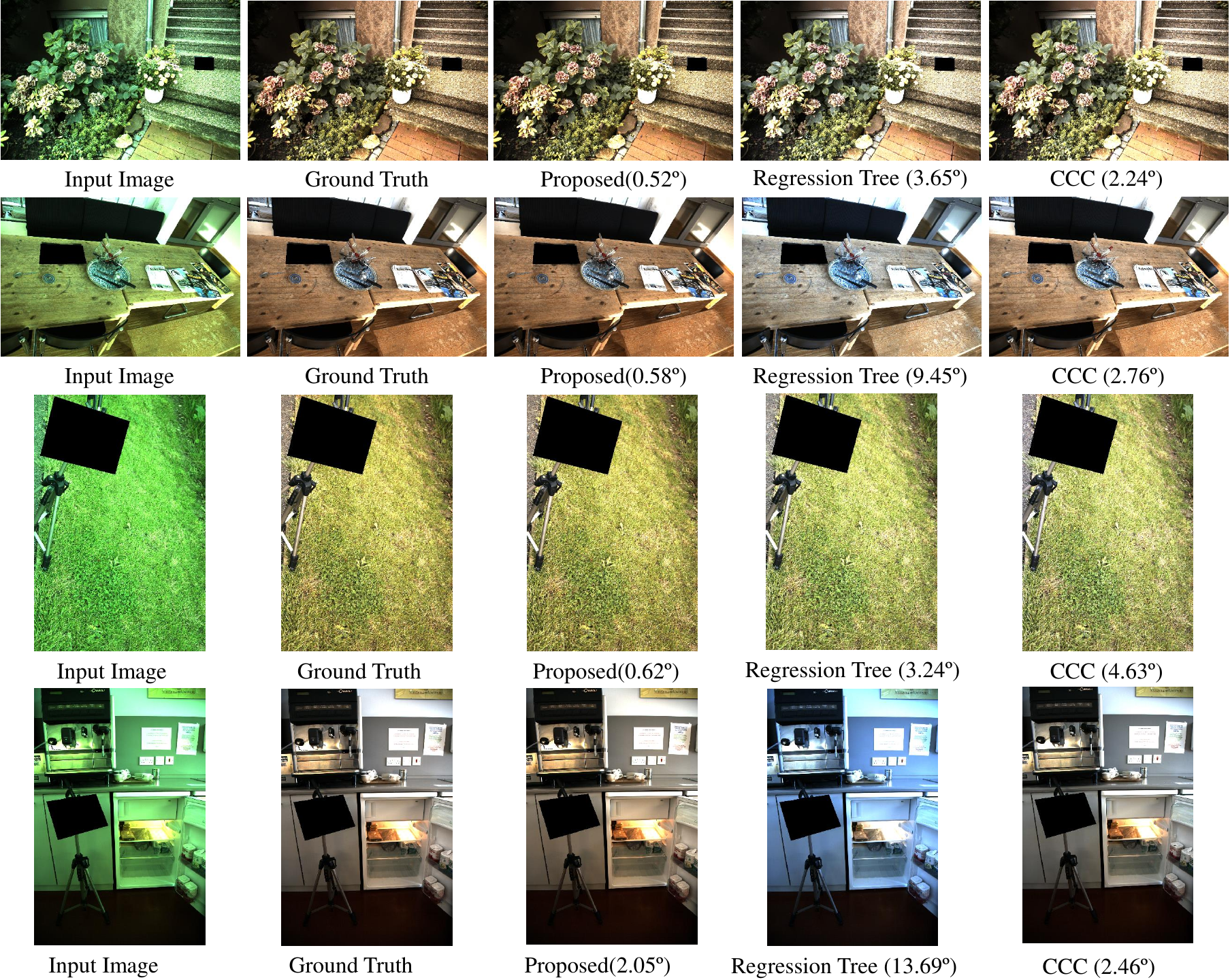

Fig. 4. Restored images from the Color Checker dataset [4,5] using illuminants estimated by three different methods: the proposed DS-Net, Regression Tree [1] and CCC [3]. The angular error is provided at the bottom of each image.

References

[1] Cheng, D., Price, B., Cohen, S., & Brown, M. S. (2015). Effective learning-based illuminant estimation using simple features. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 1000-1008)

[2] Bianco, S., Cusano, C., & Schettini, R. (2015). Single and Multiple Illuminant Estimation Using Convolutional Neural Networks. arXiv preprint arXiv:1508.00998

[3] Barron, J. T. (2015). Convolutional Color Constancy. In Proceedings of the IEEE International Conference on Computer Vision (pp. 379-387)

[4] Gehler, P. V., Rother, C., Blake, A., Minka, T., & Sharp, T. (2008, June). Bayesian color constancy revisited. In Computer Vision and Pattern Recognition, 2008. CVPR 2008. IEEE Conference on (pp. 1-8). IEEE

[5] Shi, L., & Funt, B. Re-processed Version of the Gehler Color Constancy Dataset of 568 Images. Accessed from http://www.cs.sfu.ca/~colour/data/

Citation

@inproceedings{shi2016deep,
  title={Deep Specialized Network for Illuminant Estimation},
  author={Shi, Wu and Loy, Chen Change and Tang, Xiaoou},
  booktitle={European Conference on Computer Vision},
  pages={371--387},
  year={2016},
  organization={Springer}
}