Details

CelebA-Dialog is a large-scale visual-language face dataset. Facial images are annotated with rich fine-grained labels, which classify each attribute into multiple degrees according to its semantic meaning. Each image is accompanied by textual captions describing the attributes and a sample user editing request.

CelebA-Dialog has:

- 10,177 identities,

- 202,599 face images,

- 5 fine-grained attribute annotations per image: Bangs, Eyeglasses, Beard, Smiling, and Age, and

- Textual captions and a user editing request per image.
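As a rough illustration of what one annotated image carries, the sketch below models a single record in Python. Only the five attribute names come from the description above. The class name `FaceAnnotation`, its field names, the use of integer degrees, and the example values are all hypothetical and do not reflect the actual annotation file format.

```python
from dataclasses import dataclass

# The five attribute names are taken from the dataset description.
# Everything else here (class name, field names, integer degrees) is a
# hypothetical layout, not the dataset's real file format.
ATTRIBUTES = ("Bangs", "Eyeglasses", "Beard", "Smiling", "Age")


@dataclass
class FaceAnnotation:
    image_name: str        # hypothetical image file name
    degrees: dict          # attribute name -> fine-grained degree (assumed int)
    captions: dict         # attribute name -> textual caption
    editing_request: str   # sample user editing request

    def __post_init__(self):
        # Every image is expected to carry a degree for all five attributes.
        missing = [a for a in ATTRIBUTES if a not in self.degrees]
        if missing:
            raise ValueError(f"missing attribute degrees: {missing}")


# Placeholder values for illustration only.
example = FaceAnnotation(
    image_name="example.jpg",
    degrees={"Bangs": 0, "Eyeglasses": 0, "Beard": 0, "Smiling": 3, "Age": 2},
    captions={"Smiling": "placeholder caption describing the smile"},
    editing_request="placeholder editing request",
)
```

Keeping the degrees in a dictionary keyed by attribute name makes it easy to check that all five annotations are present before a record is used.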

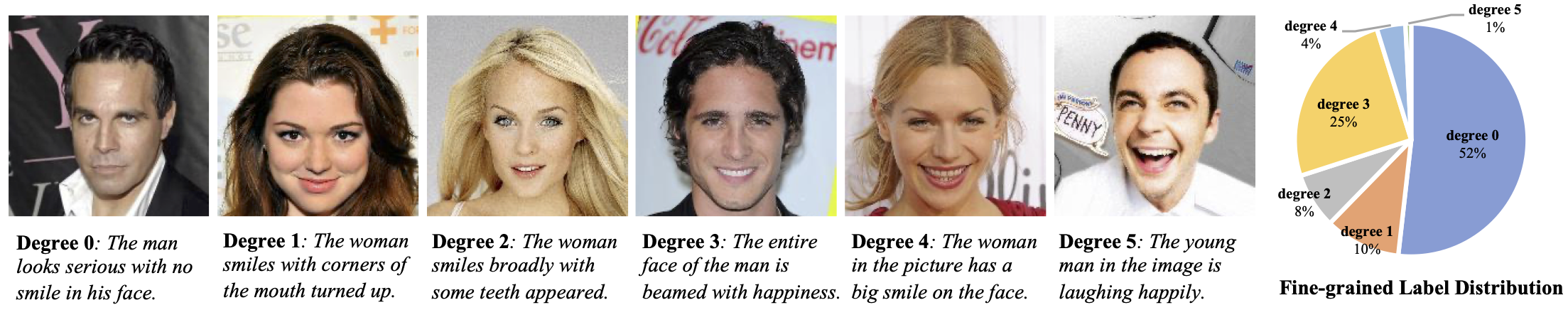

Sample Images

We show example images and annotations for the Smiling attribute. Below the images are the attribute degrees and the corresponding textual descriptions. We also show the fine-grained label distribution of the Smiling attribute.
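A label distribution like the one shown for Smiling can be computed by counting how often each degree occurs. The following is a minimal sketch, assuming the degrees are available as a list of integers; the function name `degree_distribution` and the toy labels are hypothetical and are not real dataset statistics.

```python
from collections import Counter


def degree_distribution(labels):
    """Return {degree: fraction of images} for a list of integer degree labels."""
    total = len(labels)
    if total == 0:
        return {}
    counts = Counter(labels)
    return {degree: counts[degree] / total for degree in sorted(counts)}


# Made-up labels for illustration only; not real dataset statistics.
toy_smiling_labels = [0, 0, 1, 2, 2, 2, 3, 5]
distribution = degree_distribution(toy_smiling_labels)
print(distribution)
```

Degrees that never occur in the input are simply absent from the result, so a caller that needs a fixed set of bins would have to fill in zeros itself.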

Download

For more details of the dataset, please refer to the paper "Talk-to-Edit: Fine-Grained Facial Editing via Dialog".

Agreement

- The CelebA-Dialog dataset is available for non-commercial research purposes only.

- You agree not to reproduce, duplicate, copy, sell, trade, resell or exploit for any commercial purposes, any portion of the images and any portion of derived data.

- You agree not to further copy, publish or distribute any portion of the CelebA-Dialog dataset, except that copies of the dataset may be made for internal use at a single site within the same organization.

Citation

@inproceedings{jiang2021talkedit,

title = {Talk-to-Edit: Fine-Grained Facial Editing via Dialog},

author = {Jiang, Yuming and Huang, Ziqi and Pan, Xingang and Loy, Chen Change and Liu, Ziwei},

booktitle = {Proceedings of International Conference on Computer Vision (ICCV)},

year={2021}

}